Now that Twitter has effectively become “the Daily Horror app”, I’ve gone

back to reading conscientiously, and the book I am currently engrossed in is

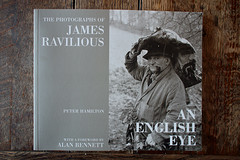

Electronic Dreams: How 1980s Britain learned to love the computer

by Tom Lean. This is one of the few popular history books I’ve read where

I can say “I was there”, and the resultant flood of memories has prompted

this post that will probably be the nerd equivalent of Jumpers For

Goalposts. (But it definitely won’t be about football, so it will

be an improvement in one respect at least.)

The Sinclair ZX Spectrum came out in 1982, just as I turned 12. I’d been

aware of home computers since at least the appearance of the ZX80 two

years earlier, and can

recall playing about with the ZX81 and similar microcomputers in stores:

“Playtime’s over, son,” rumbled the salesman in Dixons, flipping the power

switch as I sat crosslegged in front of their demo VIC-20 about to start

my second round of Galaxians. A week

previously, he’d been happy enough to give me his full patter on it, but

he was clearly now aggrieved that I hadn’t yet nagged a parent

into buying one - for the very good reason that they simply dismissed it

as too expensive, which it was at £200 (and it needed its own

proprietary tape deck). But yes, I wanted one. How badly? I’d already hung

around with some of the geekier lads in the year above, playing with the

school’s Commodore PET 2001 in the classroom during breaks. One of the

Chemistry teachers owned a Sharp microcomputer, which was a rare possession

at the time, and he let a select few of us tentatively prod at it during a

couple of lunch hours, although we had to endure some formalised teaching

of programming from him (while we mainly wanted to make these new wonder

machines print silly messages or rude words). Those early encounters had

led to borrowing a paperback that covered basic BASIC programming from my

local library, which I studied closely. Following on from an existing

interest in electronics, this was a natural progression and yet pleasantly

diverting: the application of rigorous logic within an entirely conceptual

frame. But I still lacked a ready means of trying any of it out.

Feeding the fever

In the meantime, I

obsessively devoured the burgeoning hobby press for this growing

interest - as with most hobbies, debating and comparing the various ways to

spend money, even if only in your own mind, was half the fun.

The magazines were odd compendia of product news, reviews, tips and

tutorial articles at the time, each covering a smorgasbord of micro models

and companies ranging from well-known international brands to local

independent retailers and one-man-band software sellers. As no one system

had yet gained significant market share, they couldn’t favour particular

products so had to write about the entire gallimaufry of competing brands and

every quirky little plastic box on sale (and quite a few that, while

well-known, weren’t available yet and actually would never appear). Your

Computer magazine, an

entirely representative example of the milieu (you can find scans of

old editions online),

ran a series on porting programs between various BASIC dialects, alongside

pieces

on ZX81 machine code, the month-by-month development of a database program

for the BBC Micro and several pages of type-in programs, including reams

of hex numbers for the machine code ones. It seems faintly incredible

now that people used to spend hours at their cherished devices tapping in streams of

opaque hieroglyphs, and further hours trying fruitlessly to identify the

mistakes in them (some of which could be down to not merely a miskeyed

entry but actual printing errors, meaning they had almost no hope of ever

making it work). One featured program was for a flight simulator which,

over the course of a very long evening, my friend, his father and I could

not get to work on their borrowed Acorn Atom - but we stuck at it

because we really wanted to see that flight sim.

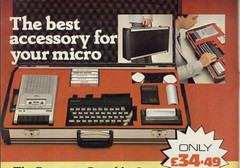

There were multipage promotions from the major makers, extolling the virtues

of their particular models, and sincere price promises from the newly

minted Thatcherite wide boys hastily opening slick retail premises to

shift boxes (although if you

didn’t have the readies to hand then of course, “playtime’s over, son”),

alongside quarter page ads placed by the archetypal lone bedroom

‘entrepreneurs’ bashing out ten “puzzle games” on a C30 cassette that

faithfully promised a world of entertainment from some fairly rudimentary

text handling. One company urged the new

ZX81 owner to “stop playing boring games” and use their advanced new

technology to run a “database filing system” for sheer unadulterated

thrills instead. But part of what the magazines had uncovered was the

enormous latent demand by ordinary people to own multifunctional devices

that they could direct to their own purposes - to do things that only the

malleability of software allows (today we have vast app stores and composable

web services, and you don’t need to learn how to code). Clearly, nobody in

their right mind would want to run a database off cassette-based storage,

yet individuals were beginning to do that in preference to tedious or

unworkable manual solutions. This was a step beyond previous consumer

product booms such as hifi, although it had something in common with the

DIY craze that arose in the early seventies.

‘I just had this instinctive feeling that nothing this cool could be useless.’ (Guy Kewney)

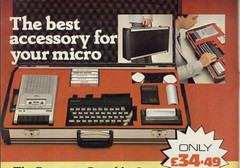

The height of portable computing

Over time, this charming amateurishness gave way to a wide range of titles

each targeting both a specific system and a particular readership. The

market for ‘thinly aimed at teenage boys’ was especially large; of

magazines such as

“Load Runner”,

“Big K” and “Crash”, only the first named was upfront enough to call itself a

computer comic. Those of us who read too many of these publications had a

distressing tendency to believe that talking like a computer - dropping words

like ‘input’ and ‘output’ into normal conversation - somehow did not

beg for derision. Even the prevailing use of BASIC (boo, hiss) in the

program listings and tutorials lent an undercurrent of exotic

Americanisation and an unfamiliar entrepreneurial spirit with the liberal

use of the dollar sign and ruthless categorisation of data as ‘strings’ or

‘integers’.

Yet still the entry price for hopping onboard this craze appeared to start

upwards of two hundred pounds (at least if you wanted to play - or, being

generous, write - games in colour). Until May 1982, that is,

which happily coincided with my twelfth birthday.

A 16K ZX Spectrum at £125 was just about within the realms of

parental indulgence, if I played the usual ploys of “I’ll have it for

birthday and Christmas!” and “I’ll never ask for anything again!”

[Narrator: “Neither of these was true.”] It took seemingly forever to

arrive, though it might actually have been anything from six weeks to

three months (Sinclair had massive shipping delays at first, and I suspect

it also took a month or two initially to reassure my dad that his money

wouldn’t just disappear).

I filled the time covering pages of notepads with my own fledgling BASIC

programs (partly because I still hadn’t grasped the use of FOR..NEXT and

was thus unrolling all my loops in long hand) and then, when the coveted

Spectrum still hadn’t arrived, I resorted to ‘entering’ them on the full

page photo in the Sinclair brochure “to practise my typing”. I was

jonesing for my fix bad. Around this time, I recall buying the

August 1982 issue of Your Computer as “beach reading” (sic) for a day out

at Rhyl, to satiate my gnawing impatience at the continued wait. (Why yes,

I was a pale and slight adolescent forever getting sand kicked in my

face.)

While I was waiting for my personal holy grail to arrive, an entire

panoply of low

priced, ‘fully-featured’ competing microcomputer models flooded on to the

market to exploit the demand newly exposed by Sinclair’s latest success.

I’m sure that at least a few owners of the Video Genie, NewBrain, Oric-1,

Jupiter Ace or Atari 400 were pleased with their purchases, but the

companies behind them might as well have farted into a paper bag for all

the lasting impact they would have on the home computing scene in the UK.

By the end of the year, it was clear that there were only three main

players in town - Sinclair, Commodore and Acorn - and the nascent software

industry necessarily coalesced around them. (Jupiter devoted most

of their adverts to justifying the novel use of FORTH in the Ace,

as if writing code rather than running it was the main selling point.

Nevertheless, the impression persisted that if your parents bought you one of

these esoteric outliers, they probably also ate muesli and banned you from

watching ITV.)

Home computing madness

Eventually, my ZX Spectrum arrived. I can’t recall exactly when it came

or how it felt, but I suspect no first time heroin shooter ever matched

the rush of that day. I wrote my little programs and I played the sample

“Breakout” game supplied by Sinclair on their demo cassette endlessly. (I

once wrote an entire database program in a morning - no idea why other

than because I could - and then couldn’t save it because the tape recorder

was faulty. And my mother agonised and then decided not to tell me about

the brand new cassette deck hidden upstairs for Xmas.) A teacher and

fellow enthusiast at school arranged a trip to a regional computer show

for some of us, and for the princely fiver I had scraped together (or

possibly cadged), I bought my first game, Artic’s Gobbleman, an early

Pacman clone that at least hadn’t been written in BASIC and was thus a

‘proper’ program. But in slowly collecting more and more games, I rapidly

discovered the limitations of my basic Spectrum.

‘When you’ve got a computer and you want to make something, you

can do it. You’ve got everything there. And I loved the fact that I

could have an idea and I could immediately put it into action.’

Tom Lean makes the excellent point that computing offered a hobby which

was largely self-contained and theoretically less prone to

‘acquisition syndrome’ than most others. Once you had the computer, almost

everything you could want to do with it was immediately possible, only

requiring your imagination, time and intellectual nous. I was a failed

veteran of both model railwaying and DIY electronics already (shockingly

predictable, I know), pursuits that each demanded endless supplies of

‘stuff you don’t already own’ before you could achieve much.1 But here

at last was a gizmo that was complete in and of itself, that only needed

the application of logic and creative insight to conjure anything I could

think of into existence. Oh, and some extra RAM, because it quickly turned

out there weren’t many games that worked in only 16K. Oh, and a joystick.

And a joystick interface (because the Spectrum hardware was

really minimal). Actually, an endless succession of joysticks (surely

there must be one that will let me win at games?!). And a Microdrive now

they’re available. And maybe a proper keyboard. Or a printer. And an

Assembler program, because I’m bored with BASIC after writing half a dozen

ropey games and I need to learn Z80 machine code to be a games developer.

Although actually, Z80 machine code is reeelly hard, but it’ll be much

easier on an entirely new computer with better graphics and sound, Dad.

(I’ve no idea how I ever perpetrated this last outrageous con to the tune

of a £200 Commodore 64 - rising Eighties middle class affluence

innit - but I went on to repeat it twice more, although at least I used my

own wages and only fooled myself on those later occasions.)

A technical digression

In today’s era of compiled high level languages, Object Orientation, IDEs,

frameworks, toolkits and modern 64 bit platforms, we’ve largely

forgotten how compromised and arcane early microcomputer architectures were.

Clever hacks were creatively employed, and I’m sure for solid reasons,

to produce the capabilities that the market demanded from low end hardware,

but none of them made life easy for assembly language programmers looking

to hit the bare metal to turn out arcade quality games. The ZX Spectrum’s

display memory map was laid out in three sections of eight character rows,

each comprising the top row of pixels for each successive row of

characters in that section of screen, then the next row of pixels for each

character row, etc. (This was quite apart from the

colour layout, which was a sequential array of character squares

superimposed on the hi-res screen - hence the famous ‘attribute clash’

when objects of differing colours met onscreen, since the character space

they shared could only have one assigned foreground colour.) So

that’s a screen split into thirds, each of which is split again into

segmented rows of characters. Imagine trying to animate a game object

moving vertically through that space, one pixel row at a time - in Z80

assembly! In fact, there’s some nifty hexadecimal maths you can use to

do this, but there’s no doubt that the various code explanations are

frankly convoluted

at first glance - as well as subsequent horrified glances. (I tried to

find a link that illustrated the screen layout to explain it better,

but drew a blank. Not surprised really. The best way to understand it is

to observe a loading screen in any emulated Spectrum game.)
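For the curious, the address arithmetic is easier to follow in a few lines of Python than in Z80. This is only a minimal sketch, assuming the standard layout with the 256x192 bitmap starting at 0x4000 and the colour attributes at 0x5800; the function and constant names are made up for illustration and don't come from any Sinclair documentation.

```python
SCREEN_BASE = 0x4000  # start of the pixel bitmap (assumed standard layout)
ATTR_BASE = 0x5800    # start of the colour attribute array


def pixel_address(x, y):
    """Return the address of the byte holding pixel (x, y)."""
    if not (0 <= x < 256 and 0 <= y < 192):
        raise ValueError("coordinates are off screen")
    third = y >> 6            # which third of the screen (0-2)
    char_row = (y >> 3) & 7   # character row within that third (0-7)
    pixel_row = y & 7         # pixel row within the character (0-7)
    column = x >> 3           # byte column (0-31), eight pixels per byte
    # The pixel row lands in higher address bits than the character row,
    # which is what produces the interleaved 'venetian blind' ordering.
    return SCREEN_BASE | (third << 11) | (pixel_row << 8) | (char_row << 5) | column


def attribute_address(x, y):
    """Return the address of the colour attribute covering pixel (x, y)."""
    return ATTR_BASE + (y >> 3) * 32 + (x >> 3)


# Walk a single pixel down the left-hand edge of the screen.
for y in range(0, 10):
    print(y, hex(pixel_address(0, y)))
```

Stepping from y=0 to y=1 jumps a full 256 bytes (0x4000 to 0x4100), while stepping from y=7 to y=8 drops back to 0x4020, which is exactly why moving a sprite smoothly down the screen was such a chore. The attribute array, by contrast, is a plain row-by-row grid of 32 bytes per character row.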

Screw this, thinks the aspiring games maker. I’ll get a C64 instead,

they’re meant for gaming. And it’s true, the Commodore 64 had hardware

sprites and a proper sound chip, and you could even program them from

BASIC by POKEing values into memory addresses - in fact, that was the only

way to program them in any language. C64 programmers usually graduated

to assembly language in a very short space of time, because Commodore

BASIC was so awful as to be little different from it. But hold on, the C64

uses banks of memory and so-called ‘shadow RAM’ - its 20K of ROM is

actually overlaid in 4K blocks on the 64K RAM. This is a plus in one sense,

in that you can copy the ROM to the underlying RAM, then switch out that

bank and modify the copy or use the shadow RAM for something else

entirely, but it also adds the complications of managing all these

disconnected bits of memory, not all of which are accessible at once by the

various custom chips. Add to which, it turns out that 6502 assembly is

even more primitive than Z80.2 Good luck, kid! Oh and by the way, the

Internet isn’t generally available yet. You can’t google the answers.

You’ll have to buy these huge thick programmers’ reference guides and

figure it out.
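To make the ‘copy the ROM, then switch it out’ trick a little less abstract, here is a toy model in Python. It is a sketch under loud assumptions: it covers only the 8K BASIC ROM at 0xA000 and the 8K KERNAL ROM at 0xE000, switched by the low two bits of the processor port at address 0x0001, and it ignores the character ROM, the I/O area and cartridges entirely. The class name and the placeholder ROM contents are invented for illustration.

```python
BASIC_START, BASIC_END = 0xA000, 0xC000      # 8K BASIC ROM window
KERNAL_START, KERNAL_END = 0xE000, 0x10000   # 8K KERNAL ROM window
PORT = 0x0001                                # processor port that selects banks


class ToyC64Memory:
    """Simplified model: ROM overlays RAM for reads, writes always hit RAM."""

    def __init__(self):
        self.ram = bytearray(0x10000)
        self.basic_rom = bytes([0xBA]) * 0x2000   # placeholder contents
        self.kernal_rom = bytes([0xEE]) * 0x2000  # placeholder contents
        self.port = 0x37                          # power-on value: ROMs visible

    def read(self, addr):
        if addr == PORT:
            return self.port
        loram = bool(self.port & 0x01)
        hiram = bool(self.port & 0x02)
        if BASIC_START <= addr < BASIC_END and loram and hiram:
            return self.basic_rom[addr - BASIC_START]
        if KERNAL_START <= addr < KERNAL_END and hiram:
            return self.kernal_rom[addr - KERNAL_START]
        return self.ram[addr]

    def write(self, addr, value):
        if addr == PORT:
            self.port = value & 0xFF
        else:
            self.ram[addr] = value & 0xFF  # lands in the RAM under any ROM


mem = ToyC64Memory()

# Copy BASIC into the RAM underneath it: reads see ROM, writes hit RAM.
for addr in range(BASIC_START, BASIC_END):
    mem.write(addr, mem.read(addr))

mem.write(BASIC_START, 0x42)     # patch the RAM copy
before = mem.read(BASIC_START)   # ROM is still switched in, so the patch is hidden
mem.write(PORT, 0x36)            # clear bit 0: BASIC ROM out, KERNAL stays
after = mem.read(BASIC_START)    # now the patched RAM copy shows through
```

The copy loop is the Python equivalent of the old PEEK-and-POKE-the-same-address idiom, which looks like a no-op until you remember that the read and the write are going to two different chips.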

(Until machine-specific coding books appeared, I regularly saw

recommendations to use generic Z80 or 6502 programmer texts, such as those

by Rodnay Zaks, which is a bit like telling someone to learn how to drive

by studying Haynes manuals.)

Needless to say, I ended up owning Toni Baker’s classic Mastering Machine

Code on Your ZX Spectrum, the Commodore 64 Programmer’s Reference Guide

and at least two thick system programming references for the Amiga. (You

can find scans of all of these online now, btw, in case you run out

of insomnia meds.) I knew

the lore of programming these systems at a low level almost off by heart

by dint of hours spent poring over such tomes,

but it was entirely theoretical - absolutely none of it ever came to be

applied on a practical level. I didn’t even get round to buying and then

never using an assembler program for the C64. Simply reading about it

became sufficient. Such is the curse of being bright but staggeringly

lazy. (This is the point where most functioning people can write,

“However, at about this time I discovered girls instead.” Yeah, that didn’t

happen either.)

How then, did all this useless esoterica and unapplied erudition benefit

us? Simply, unlike the previous generation, we were the first to generally

understand that computers were not the all-powerful, all-knowing,

‘intelligent’ entities of popular sci-fi - that they were in fact dumb as

rocks. But if you could command the rocks into life and make them

perform the most basic tasks, they would be able to do them much

faster and more accurately than any person. And if you could break any

complex task down into its most elemental steps, they could do that too.

The earliest home computer games were based entirely on the

simplest possible manipulation of screen characters - moving single ASCII

symbols around one block at a time in response to either player

command or some inner brutal logic:

410 IF player_position>alien_position THEN

420 LET alien_position = alien_position+1

430 END IF

Within two or three years, game sprites were obeying the laws of mass,

inertia, gravity and thermodynamics - simulated entirely by

self-taught teenagers working at the lowest level of CPU programming

(though I wasn’t one of them, obviously). I’m not going to point

at modern teens and sneer about vlogging by comparison, as I think that

also demands a similar level of skill in psychology, presentation and

narrative, but this was probably the only time in history at which ‘deep

science’ subjects were broadly comprehended by a significant part of a

generation. This stuff was quite literally rocket science.

Moreover, for me personally, my maths finally started to improve. Content

to bump along with the minimal adequate effort in a middle set, I

suddenly decided maths might actually be useful in future and began to pay

attention. (I learnt trig from the ZX Spectrum manual before the class

covered it.) Miss B, bless her soul, pushed my lazy arse with extra tuition

to get me moved up to the top set and, eventually, to O and A Level grades.

(And yes, I work in IT now, although the gulf between what I did on my ZX

Spectrum and what I do now is incomprehensibly vast.)

An utterly typical Sinclair User family

As the magazines began to target specific platforms, I

lapped up all their technical articles and avidly read the

instalments of their “programmers diary” features (a genre surely

rivalled only by “Journal of a Chartered Accountant” and “The Librarian’s

Day” for exotic thrills), such as Andrew Braybrook’s wonderful

Birth of a Paradroid

in the otherwise risible Zzap!64.

The newly launched Your Spectrum mag set out their stall early on with a

series of tutorials by

Toni Baker on writing machine code for the Spectrum; prior to this, we’d

had to endure the excruciatingly worthy Sinclair User with

their ‘User of the month’ feature,

about people who’d use their ZX printer to print their shopping lists.3

(Worse, they often put the user on their cover, which was frequently

embarrassing when classmates found it amusing to identify me with the

person in question. The

toddler was

bad enough, but the stick I got for the

Morris Dancer

one - the resemblance was plain, apart from the costume - was mortifying.

But then I was naïve enough to be seen with it openly in school.)

“People could program any of the new machines, but the expectation was

that typical users would just use them as software players.”

The Amiga, when it eventually became generally available, was potentially easier to develop software on, as it had custom

chips that could perform a lot of the work independently, a proper 16 bit

architecture and a well-developed set of OS libraries to handle the basic

tasks - but by that point, the complexity and expectations of the typical

game were almost

beyond what one person could hope to satisfy. Although this generation of

hardware would be the peak of the home computing market, it also marked

the slow death of the amateur ethos that had lingered from the

early days. From here on, the market split into gaming consoles and

desktop PCs, both of which were basically appliances for running purchased

software. (Those who still enjoyed typing stuff in were by now

discovering UNIX at university, which would lead them to Linux so they could

run it at home.) In the face of the new 16 bit machines, the makers of the

older 8 bit computers attempted to launch enhanced versions - the

Commodore 128, Spectrum+, etc - but found themselves hampered by the

petrified architecture of their ancestors and the need to maintain

compatibility with the existing software base. This was before any

thought of “graceful degradation” or API versioning (or, indeed, APIs),

and so the legacy system was usually bolted on as a ‘compatibility mode’

in a way that even Dr Frankenstein would think inelegant.

Popularity, or not

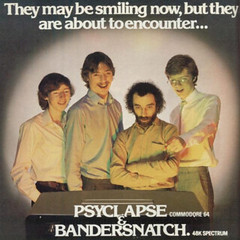

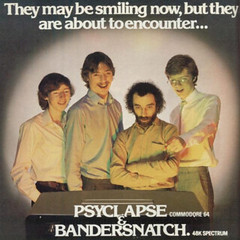

Imagine’s programmers review their employer’s long term outlook

To read Lean’s history, one might think that home computing was a

pervasive craze among all youngsters in the early 1980s. It was significantly

popular - mainly for the opportunity to play arcade games and the easy way

one could copy and swap those games with others - but to think this held

true across the entire playground would be hugely mistaken. In my

experience, the kids who were most obsessive about these new devices were

the ones who would have been obsessed with any highly technical,

esoteric and largely insular hobby. Those users who were actually doing

any serious programming were a select few; those working commercially even

fewer; and of those, probably only a handful writing code to the level

of the top games. I don’t recall much interest in programming amongst my

peers, but then others sharing that interest in my year may also have been

similarly introverted and quiet, and if so, they were probably wiser than

me in keeping it to themselves (whereas I might as well have been wearing

a “Geek, kick me” t-shirt). A little later, when the school computer room

opened, offering a new source of breaktime respite to those who had

previously concealed themselves in the library, I was the only

member of my year regularly in attendance. There were some younger boys, who

seemed most interested in provoking irritation, and a group of older

know-alls who would pronounce with authority on the subject, some of whom were

even mature enough to talk to girls with passable confidence, which to

me was akin to having a superpower. Not that we saw many girls in the computer

room during breaks - if it hadn’t been for formal lessons, almost none of

them would ever have got near a BBC Micro. (The lessons weren’t available

to the upper years, probably because the school didn’t offer O level

Computer Studies and nobody could be bothered to integrate IT into their

traditional exam curricula.)

It was through this lab that I first encountered computer networking,

which was initially seen within the industry as a way to share

expensive printers and disk drives across several machines rather than

to allow user communication. Once again, I hit the system

programming guides to see if it was possible to hack into remote BBCs

using Acorn’s ‘Econet’ protocol and make them misbehave, but it seemed it

was insufficiently vulnerable to allow that without collusion. We went

back to distracting Mr M., who was nominally ‘in charge’ of the room, so

we could liberate the floppy disks holding his games collection

(a session on Elite being the main bribe used to get kids to complete

their computer work). The worst that could happen was being identified by

a teacher as ‘someone good with computers’ and therefore liable to being

pressed into service on whatever IT project they had in mind, most of

which were viewed as cringeworthy and twee by those of us who found

ourselves volunteered. It may seem strange and hypocritical that a bunch

of computer geeks could develop any concept of what was ‘uncool’, but

there were definite lines drawn within our little sect. Publishing a

school newsletter: yuk. Hacking the caveman game FRAK! so that he uttered

a similar but different four-letter word: cool.

Weirdly though, a shared interest in computer games, even though I wasn’t

particularly good at or even especially keen on playing them, finally

kickstarted some sort of social life outside of school. Friends would come

over for late evening sessions of Revenge of the Mutant Camels or, even

better, 2-up Double Dragon. (Jetpac, in which the aliens exploded with

short farty noises, was a particular favourite when we discovered that

hammering the pause key during an explosion could draw this out into a

prolonged raspberry, to great hilarity.) This would eventually lead

in sixth form, when we were old enough to get away with underage

drinking, to nights out at the pub instead. (It was at this point that I

discovered the undemanding joys of ‘having a laugh with your mates’, and

computers finally began to take a healthier backseat to everything else in

adolescence - except girls. Plus ça change.)

My last ‘home’ computer was an Amiga A1200, with an internal hard drive,

bought with the seeming riches earned from my first post-graduation job

as the closest thing I could get to the UNIX workstations I used at work - you

could even download a version of C-shell for it (although it wouldn’t run

Minix due to the lack of a Memory Management Unit in the pared-down CPU

used, and believe me I tried). Unfortunately, the hard drive - an

inconceivably expansive 80MB Western Digital model - had an infuriating

habit of locking up when it became warm, making it very frustrating to

use. There was still no widely available Internet access outside of

academia (not that the Amiga supported TCP/IP either, and even on Windows

it was a dreadful hack), and I still didn’t find the time to do any proper

programming. When I moved on to a non-academic job, I bought a cheap

Macintosh and I don’t now recall what happened to that Amiga.

You can always go back

One of the most pleasant surprises of writing this post was the discovery

that it’s (almost) all still around. There are images of all the old games

across various sites that you can download to run under faithful

emulation on the platform of your choice. (Manic Miner on a tiny

smartphone screen? Seems crazy but you can do it. Even Gobbleman is out

there.) There are native cloned

versions of the most popular ones if you prefer that. There are lots of

scanned magazines going back to the earliest days of the hobby, and

PDF scans of complete books. There are even people

still writing games

for these systems today. I suppose working in a modern development

environment must feel a bit like skimming the outermost atmosphere of a

particularly large, murky planet with an unfathomable ecology

beneath, whereas an 8 bit computer is like a decently sized asteroid whose

entire structure you can understand inside and out within a reasonable

time. At this degree of enlargement, one realises fundamentally that

everything a computer does only ever involves, at the most basic level,

adding small numbers together.

Tom Lean’s laudable book brought all this back to me. I pulled up a

few emulators and tried out the old games again. And I’m still useless at

them, and I still can’t be bothered to persist with any but the very

easiest for more than ten minutes. I read a few modern

blog posts

on writing machine code games for these ancient platforms - and quickly

realised that I still have neither the time nor the inclination to go very

far down that rabbit hole. I even finally tried a bit of online

6502 assembly programming.

But what an agreeable Proustian rush it’s been

to look back on those pioneer days. Thanks, Tom.

(All highlighted quotes taken from the book.)

Top Five Games

- Revenge of the Mutant Camels (C64): Although I was a huge fan of Jeff

Minter - whose role in shepherding teenage boys to their destiny of

becoming Pink Floyd fans should not be underestimated - this was the only

Llamasoft game I didn’t find too complex to master. Press fire button,

direct bullets, destroy weird alien attack waves (or leap gingerly over

exploding sheep).

- Paradroid (C64): A beautifully simple concept but with enough going

on to keep you playing, and probably the most elegantly designed game I

ever saw.

- Scuba Dive (Spectrum): Beautiful marine animation, plenty to see and a

slightly gentler pace, involving a lot of carefully timed underwater

manoeuvring.

- Jetpac (Spectrum): Once the control method is mastered, the

straightforward gameplay of this early Ultimate release made it far more

enjoyable than anything they did later (including the sequel, Lunar

Jetman, which by stark contrast was impossibly hard).

- Double Dragon (Amiga): Laughably poor beat ‘em up with highly silly

sound samples turned into hilarious fun with the addition of a second player

and several piss-taking spectators. Even better, you could - accidentally

or intentionally - twat the other player’s character with a baseball bat.

Honourable mentions: Impossible Mission, Beam Rider, Elite.

Other bubbles

- Extract

from the book, on how the Spectrum inspired creative games.

- Spectrum vs. C64: let’s settle this - you all smell.


“Fibre Broadband is here”, proclaimed the sign on the BT cabinet next to

the A41 layby grandly. Behind it lay the quietly mouldering remains of the

Cherry Tree Hotel, the multiple voids in its shabby walls exposing its

black heart to an apathetic 21st Century. Really, they had fast Internet?

If you’re familiar with a certain place BB knows well, you’ll probably

recognise the distinctively ornate and serpentine end of this public

bench, being as it is entirely redolent of only one place. Yes,

this is, of course, located in…

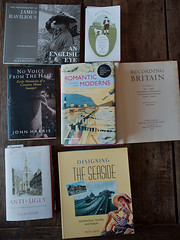

A busy week for our local delivery services. (Still, at least one of them

was a slim paperback with a shipping cost 280 times greater than its sale

price.)
